Abstract

One research direction in the field of assistive technologies aims at identifying the causes that make applications inaccessible. Our work follows this direction, focusing on the role that development platforms play in the creation of accessible applications. In particular, we investigate how well development platforms support developers in easily and conveniently creating accessible applications. So far, this ongoing research has considered mobile development platforms and how they support the creation of apps that are accessible to screen-reader users. Here we present two of the results achieved so far.

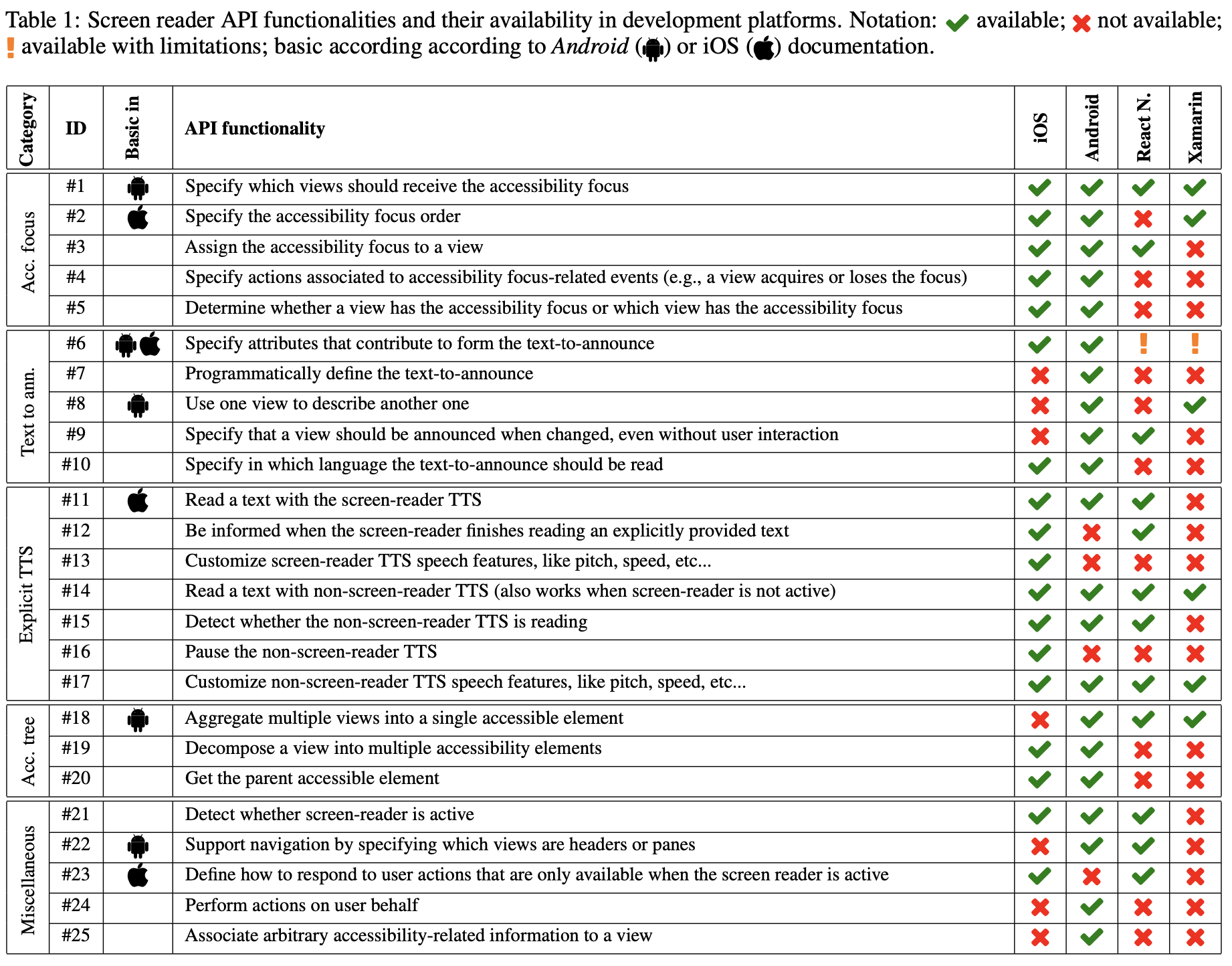

- We systematically analyzed the screen-reader APIs available in native iOS and Android, and examined whether, and to what extent, the same functionalities are available in two popular Cross-Platform Development Frameworks (CPDFs): Xamarin and React Native. This analysis reveals that many functionalities are shared by the native iOS and Android APIs, but most of them are not available in React Native or Xamarin. In particular, not even all the basic APIs are exposed by the examined CPDFs.

- Accessing the unavailable APIs is still possible, but it requires additional effort from developers, who need to know the native APIs and write platform-specific code, which partially negates the advantages of CPDFs. To address this problem, we consider a representative set of native APIs that cannot be accessed directly from React Native and Xamarin, and show sample implementations for accessing them.

Screen-reader related API availability in native platforms

Screen readers can make applications accessible, including those developed by third parties. In some cases, an application can be (at least partially) accessible through a screen reader even if its developer takes no explicit action to support screen-reader accessibility. However, creating fully accessible apps often requires intervention from the developers. For example, the developer has to specify the alternative text for images so that the screen reader can read it aloud. This intervention is carried out through screen-reader APIs, which developers can use to interact with the screen reader or to customize its behavior.
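As a concrete illustration of this kind of intervention, the sketch below lists the property through which each platform lets developers set an element's alternative text, and models, in a deliberately simplified way, what a screen reader can announce for an image with and without it. The property names are the standard ones (`accessibilityLabel` in UIKit and React Native, `contentDescription` on Android views, `AutomationProperties.Name` in Xamarin.Forms; we assume Xamarin.Forms here). The `ImageElement` type and the `announce` function are hypothetical and do not reproduce the exact speech output of VoiceOver or TalkBack.

```typescript
// Platforms considered in the text (assumption: Xamarin means Xamarin.Forms).
type Platform = "iOS" | "Android" | "React Native" | "Xamarin.Forms";

// Property through which each platform lets developers set the
// alternative text that a screen reader reads aloud for an element.
const altTextProperty: Record<Platform, string> = {
  "iOS": "accessibilityLabel",                  // UIKit (UIAccessibility)
  "Android": "contentDescription",              // android.view.View
  "React Native": "accessibilityLabel",         // prop of core components
  "Xamarin.Forms": "AutomationProperties.Name", // attached property
};

// Hypothetical, simplified model of an image shown by an app.
interface ImageElement {
  altText?: string; // set by the developer through the property above
}

// Simplified model of what a screen reader announces for an image:
// without alternative text, only the generic role is available.
function announce(img: ImageElement): string {
  const label = img.altText ? img.altText.trim() : "";
  return label !== "" ? `${label}, image` : "image";
}

console.log(altTextProperty["Android"]);            // contentDescription
console.log(announce({}));                          // image
console.log(announce({ altText: "Company logo" })); // Company logo, image
```

The point of the model is that the information the user needs ("Company logo") exists only if the developer supplies it; the screen reader alone cannot infer it from the pixels.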

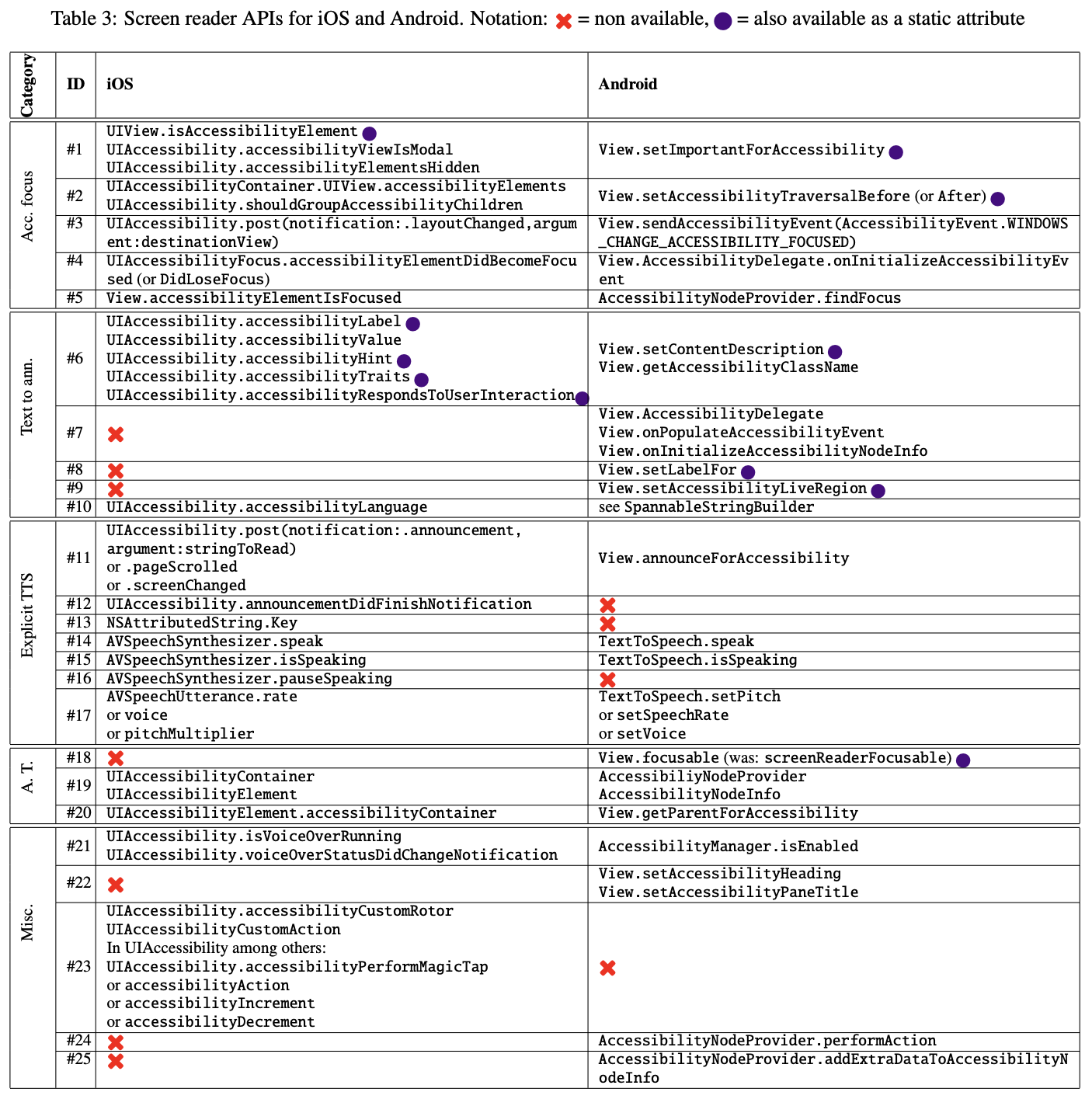

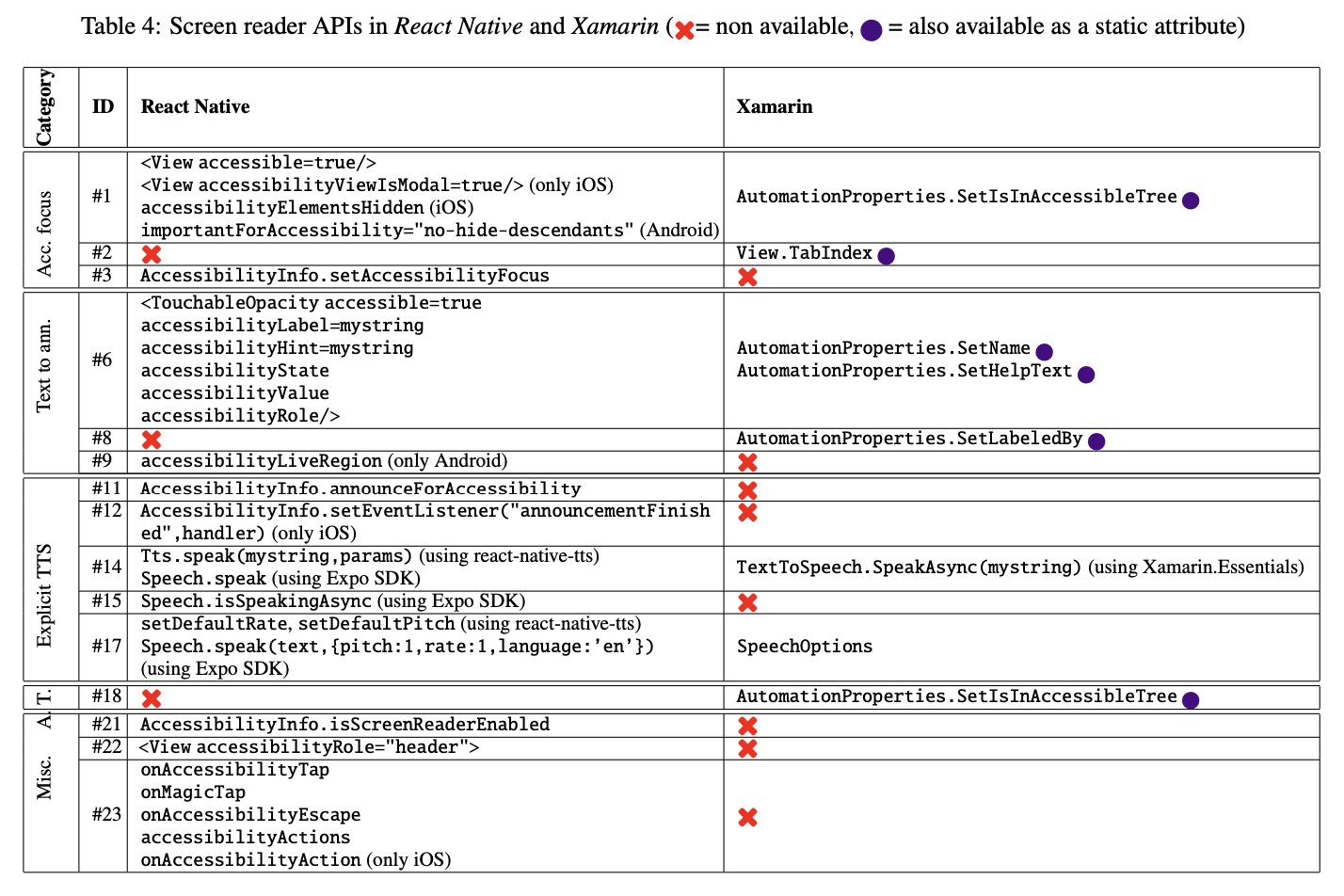

We analyzed screen-reader APIs by studying the developer documentation of the iOS and Android platforms and by experimenting with the actual implementations. We then created a taxonomy of the identified APIs based on the conceptual functionality they expose to developers. The result of this analysis is shown in Table 1, which lists the 25 identified API functionalities and their availability in the four considered platforms (native iOS, native Android, React Native, Xamarin). Tables 3 and 4 (available at the bottom of this page) report additional information on how each API is implemented.

Table 1 also indicates which APIs implement basic screen-reader functionalities. To identify the basic functionalities, we took into account the accessibility principles and best practices presented in the introductory accessibility documentation by Apple and Google, and considered the APIs mentioned in these resources as the basic ones.

CPDF screen reader API availability

The last two columns of Table 1 report whether each functionality is available in React Native and in Xamarin, respectively. We can observe that in most cases (14 out of 25), the same functionality is available on both iOS and Android. In all of these cases, a CPDF could wrap the native APIs into a single cross-platform API, so that developers could easily access that functionality. In practice, however, this is often not the case in either of the examined CPDFs, and particularly in Xamarin: out of the 14 functionalities shared by iOS and Android, only 8 are wrapped by React Native APIs and only 5 by Xamarin APIs. For the APIs that are exposed by only one native platform, a CPDF could still wrap the API for that platform. Again, this is the case only for a minority of them: out of the 11 APIs exposed by iOS or Android (but not both), only 5 are wrapped by React Native and only 2 by Xamarin. The situation is only slightly better when considering basic functionalities alone: out of 8 basic functionalities, only 6 are available in React Native and 5 in Xamarin.
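The arithmetic behind these figures can be checked with a short script. The sketch below re-uses only the counts reported in this section (it does not reproduce the individual rows of Table 1) and derives the overall share of the 25 functionalities wrapped by each CPDF; `Coverage`, `reported` and `summarize` are illustrative names.

```typescript
// Counts reported in this section for the 25 functionalities of Table 1.
const SHARED_TOTAL = 14; // available on both iOS and Android
const SINGLE_TOTAL = 11; // available on only one of them
const BASIC_TOTAL = 8;   // basic functionalities

// Illustrative record of how many functionalities a CPDF wraps.
interface Coverage {
  shared: number;         // out of SHARED_TOTAL
  singlePlatform: number; // out of SINGLE_TOTAL
  basic: number;          // out of BASIC_TOTAL
}

type Cpdf = "React Native" | "Xamarin";

const reported: Record<Cpdf, Coverage> = {
  "React Native": { shared: 8, singlePlatform: 5, basic: 6 },
  "Xamarin": { shared: 5, singlePlatform: 2, basic: 5 },
};

// Overall number of wrapped functionalities and the rounded percentage.
function summarize(c: Coverage): { wrapped: number; total: number; percent: number } {
  const total = SHARED_TOTAL + SINGLE_TOTAL; // 25
  const wrapped = c.shared + c.singlePlatform;
  return { wrapped, total, percent: Math.round((100 * wrapped) / total) };
}

const cpdfs: Cpdf[] = ["React Native", "Xamarin"];
for (const cpdf of cpdfs) {
  const s = summarize(reported[cpdf]);
  console.log(`${cpdf}: ${s.wrapped}/${s.total} (${s.percent}%), basic ${reported[cpdf].basic}/${BASIC_TOTAL}`);
}
// React Native: 13/25 (52%), basic 6/8
// Xamarin: 7/25 (28%), basic 5/8
```

Overall, React Native wraps about half of the 25 functionalities, while Xamarin wraps fewer than a third of them.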

Implementation of accessibility functionalities

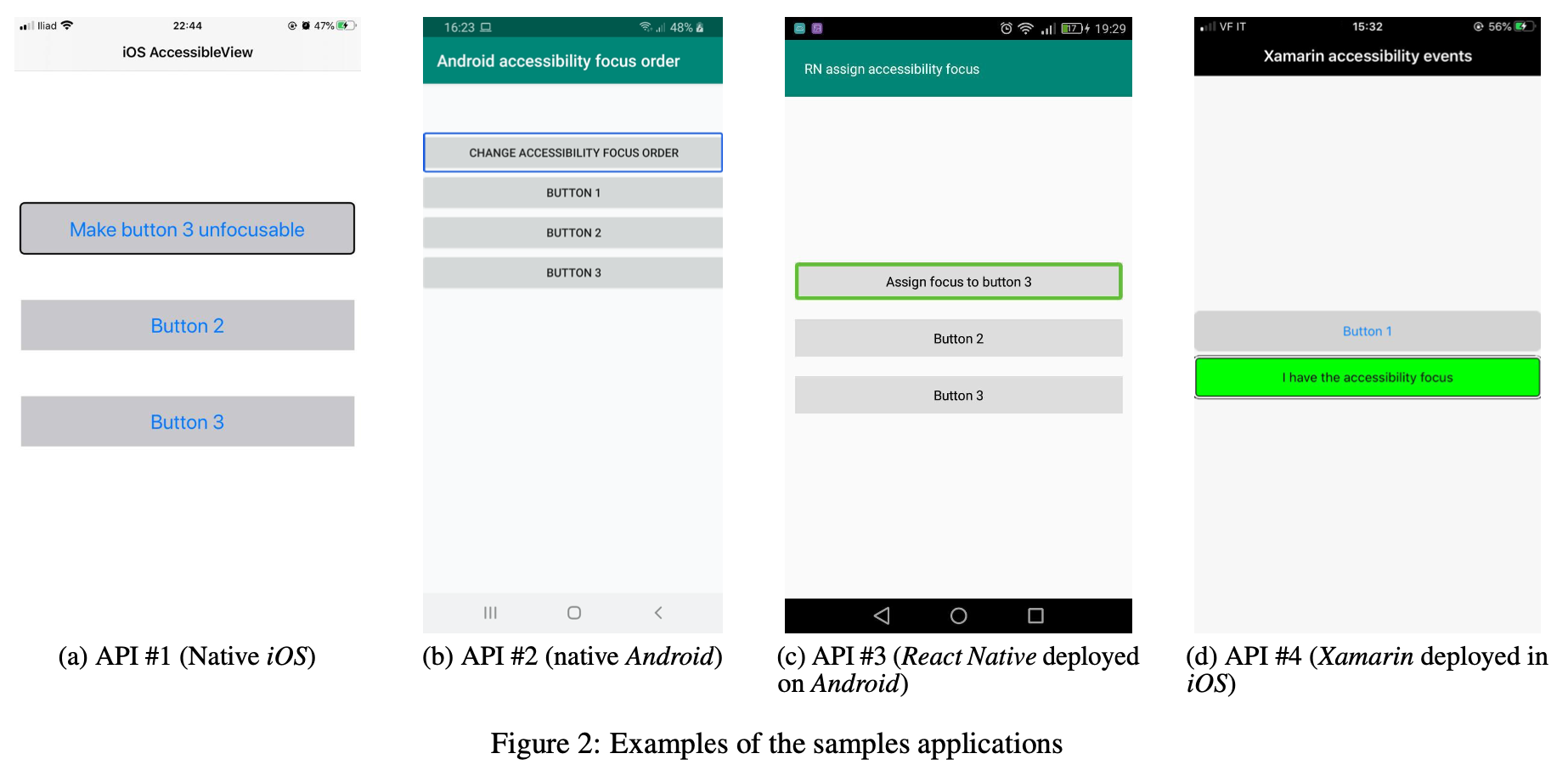

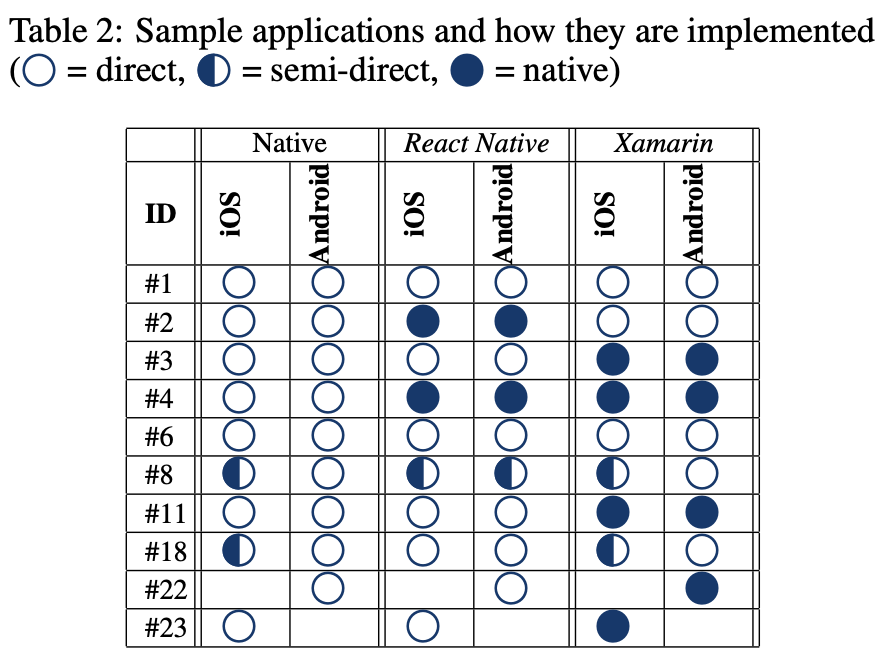

We selected a subset of the APIs from Table 1 (including all basic functionalities) and, for each of them, we designed a sample app, which we then implemented for the considered platforms (iOS, Android, React Native and Xamarin). The source code is available online.

Figure 2 shows some examples. Figure 2a shows the sample app for API #1 implemented in native iOS: the app shows three buttons, which can initially all receive the accessibility focus; activating the first button makes the third button no longer focusable. Figure 2b shows the sample app for API #2 implemented in native Android: the app shows four buttons. Initially, the accessibility focus order matches the visual order, but when the first button is activated, the accessibility focus order changes. The third example (Figure 2c) is the sample app for API #3 implemented in React Native and deployed to Android. The app shows three buttons: when the first one is activated, the accessibility focus is assigned to Button 3. Figure 2d shows the sample app for API #4 implemented in Xamarin and deployed to iOS. The app shows two buttons: when the second one receives the accessibility focus, it changes its color and label.
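To make the behavior of these four sample apps concrete independently of any platform, the sketch below models screen-reader navigation as plain data: elements can be made non-focusable (API #1), given a custom focus order (API #2), assigned the focus programmatically (API #3), and observed when they receive the focus (API #4). The `FocusModel` class is hypothetical and is not iOS, Android, React Native or Xamarin code; on a device, API #1, for instance, corresponds to platform properties such as `isAccessibilityElement` on iOS or `importantForAccessibility` on Android.

```typescript
// Hypothetical, platform-independent model of screen-reader focus
// navigation; it is not iOS, Android, React Native or Xamarin code.
class FocusModel {
  private focusable: { [id: string]: boolean } = {};
  private order: string[];
  private current: string | null = null;
  private listeners: Array<(id: string) => void> = [];

  constructor(ids: string[]) {
    this.order = ids.slice(); // initially, focus order = visual order
    for (const id of ids) this.focusable[id] = true;
  }

  // API #1: make an element focusable or not by the screen reader.
  setFocusable(id: string, value: boolean): void {
    this.focusable[id] = value;
  }

  // API #2: replace the accessibility focus order.
  setOrder(ids: string[]): void {
    this.order = ids.slice();
  }

  // API #3: move the accessibility focus to an element (ignored if the
  // element cannot receive the focus).
  focus(id: string): void {
    if (!this.focusable[id]) return;
    this.current = id;
    for (const cb of this.listeners) cb(id);
  }

  // API #4: be notified whenever an element receives the accessibility focus.
  onFocus(cb: (id: string) => void): void {
    this.listeners.push(cb);
  }

  focused(): string | null {
    return this.current;
  }

  // Elements visited when the user swipes through the screen, in order.
  traversal(): string[] {
    return this.order.filter((id) => this.focusable[id] === true);
  }
}

// Mirrors the API #1 sample app: activating Button 1 hides Button 3.
const app = new FocusModel(["Button 1", "Button 2", "Button 3"]);
app.setFocusable("Button 3", false);
console.log(app.traversal()); // [ 'Button 1', 'Button 2' ]
```

Describing each sample app as an expected traversal before and after Button 1 is activated gives a platform-neutral statement of what every implementation (native or CPDF) should achieve.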

As detailed in Table 2, we implemented each sample app in native code (iOS and Android) and in the two considered CPDFs, each of which can be deployed to both iOS and Android. In a few cases, the implementation is missing (denoted by an empty cell in Table 2) because the functionality is not supported by the given native platform and cannot be obtained by combining other APIs (e.g., the rotor is not available in Android). We classify the sample apps according to how the accessibility functionality is implemented:

- Direct API use: the accessibility functionality is implemented directly using the API exposed by the platform;

- Semi-direct API use: the accessibility functionality is not exposed by the platform API, but similar behavior can still be achieved by combining APIs exposed by the platform;

- Native API use (for applications developed with a CPDF only): the functionality is not exposed by the CPDF API, so the implementation requires accessing the native APIs.
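The three categories, together with the missing-implementation case, can be read as a small decision procedure. The sketch below encodes it under two assumptions that the text does not state explicitly: that the four boolean inputs of the hypothetical `Support` type are known for each functionality and platform, and that the least platform-specific option is preferred when more than one applies.

```typescript
// Categories used to classify the sample apps (empty cells in Table 2 = "missing").
type Implementation = "direct" | "semi-direct" | "native" | "missing";

// Hypothetical description of one functionality on one platform.
interface Support {
  isCpdf: boolean;            // React Native or Xamarin, as opposed to native iOS/Android
  exposed: boolean;           // the platform API exposes the functionality
  viaCombination: boolean;    // similar behavior obtainable by combining exposed APIs
  supportedNatively: boolean; // the underlying native platform can provide it
}

// Assumption: the least platform-specific option is preferred, so the
// checks go from direct use to native code.
function classify(s: Support): Implementation {
  if (s.exposed) return "direct";
  if (s.viaCombination) return "semi-direct";
  if (s.isCpdf && s.supportedNatively) return "native"; // CPDF only
  return "missing";
}

// Example: a functionality the native platform does not provide at all,
// such as the rotor on Android.
console.log(classify({ isCpdf: false, exposed: false, viaCombination: false, supportedNatively: false })); // missing
```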

Showcase apps

We are currently developing several showcase apps, implemented natively and with CPDFs, that demonstrate how screen-reader APIs can be used.

| CPDF | iOS | Android | Source code |
|---|---|---|---|
| React Native | Not yet available | Available | |
| Xamarin | | Available | |
| Flutter | Not yet available | | |

Current and future work

- Since the CPDFs considered in our work are open source, we intend to propose changes to them that address the problems we identified.

- We intend to consider other CPDFs, in particular Flutter.

- We intend to investigate APIs for assistive technologies other than screen readers.

- We intend to apply the same methodology to traditional (i.e., non-mobile) development platforms.

Bibliography

- Sergio Mascetti, Mattia Ducci, Niccolò Cantù, Paolo Pecis, and Dragan Ahmetovic. Developing Accessible Mobile Applications with Cross-Platform Development Frameworks. Technical Report, 2020. arXiv:2005.06875v1.